What is edge AI?

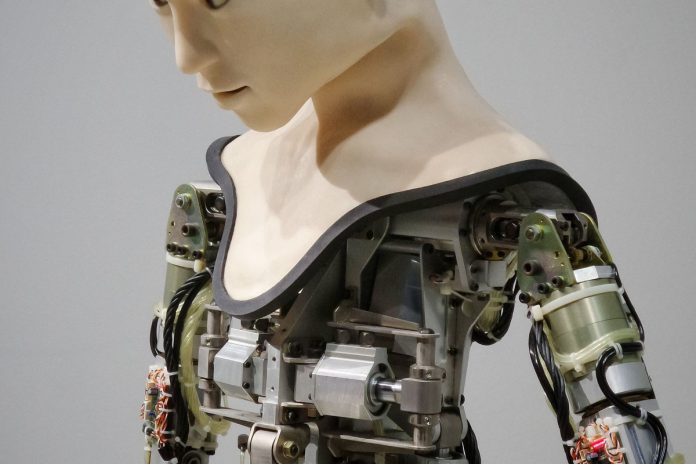

Edge AI lets companies build AI workflows that span both the cloud and devices outside it. These devices sit close to people and physical objects, so they can complete important tasks faster than a centralized data center can.

This departs from the now-common practice of building and running AI in the cloud, an approach popular enough that people have started calling it cloud AI. It also departs from older approaches in which AI algorithms were deployed on desktops or special-purpose hardware for tasks like reading check numbers.

The edge is often described in terms of physical network equipment, such as a gateway, router or 5G cell tower. But much of the value of edge AI lies in the end devices themselves, such as smartphones and driverless vehicles.

One way to understand edge AI is as an extension of the digital transformation practices developed in the cloud. Earlier forms of edge innovation improved the efficiency and performance of PCs, smartphones, vehicles, appliances and other devices by applying cloud best practices. Edge AI focuses on the architectures and processes for extending data science, machine learning and AI beyond the cloud.

How edge AI works

In the past, most AI applications were built with symbolic AI techniques that hard-coded rules into the application, as in expert systems or fraud detection algorithms. Occasionally, nonsymbolic approaches such as neural networks were used for applications like optical character recognition of check numbers or typed text.

Over time, researchers learned how to scale up deep neural networks in the cloud, both to train AI models and to generate answers from input data, a process known as inferencing. Edge AI extends that capability beyond the cloud. In general, edge AI is used for inferencing, while cloud AI is used to train new algorithms.

Inferencing requires far less compute and energy than training. As a result, inferencing can often run on ordinary CPUs or even low-power microcontrollers in edge devices. More demanding workloads can use AI chips that improve inferencing performance while reducing power consumption.
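To make the training/inferencing split concrete, here is a minimal sketch of what an edge device might run. It assumes a tiny logistic model whose weights were already fitted elsewhere; the weight values, the `predict` function and the input features are all made up for illustration. The device only performs a forward pass, with no gradients, optimizer state or training data, which is one reason inferencing fits on modest hardware.

```python
import math

# Hypothetical weights standing in for a model trained in the cloud
# and shipped to the device. The values are invented for illustration.
WEIGHTS = [0.8, -0.5, 0.3]
BIAS = -0.1


def predict(features):
    """Run one forward pass: a weighted sum followed by a sigmoid."""
    z = BIAS + sum(w * x for w, x in zip(WEIGHTS, features))
    return 1.0 / (1.0 + math.exp(-z))


# One local inference on a made-up feature vector.
score = predict([1.0, 0.2, 0.5])
print(f"score = {score:.3f}")
```

A real deployment would load weights from a model file and use a runtime suited to the device, but the shape of the work is the same: fixed parameters in, one prediction out.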

Benefits of edge AI

Edge AI offers several advantages over cloud AI:

- Lower latency. Inferencing runs locally, so the device does not have to wait for a response from the cloud.

- Lower bandwidth use and cost. Audio, video and high-fidelity sensor data no longer need to be sent over cellular networks.

- Better data security. Data that is processed locally does not have to be stored in the cloud or sent across a network where it could be intercepted, which reduces the exposure of sensitive information.

- Improved reliability and autonomy. The AI keeps working when the network or cloud service is unavailable, which is essential for applications such as self-driving cars and industrial robots that require uninterrupted operation.

- Lower power consumption. Many AI tasks can run efficiently on-device, which preserves battery life.
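The bandwidth point can be illustrated with simple arithmetic. The sketch below compares uploading one minute of raw speech audio with uploading only the result of local inferencing. The audio format (16 kHz, 16-bit, mono PCM) and the 200-byte result size are assumptions chosen for illustration, not figures from any particular product.

```python
# Assumed audio format: 16 kHz, 16-bit, mono PCM.
SAMPLE_RATE_HZ = 16_000
BYTES_PER_SAMPLE = 2
SECONDS = 60

# Assumed size of the recognized-text result sent instead of the audio.
RESULT_BYTES = 200

# Bytes needed to upload the raw audio versus the local result.
raw_bytes = SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * SECONDS
reduction = raw_bytes / RESULT_BYTES

print(f"raw audio: {raw_bytes:,} bytes, local result: {RESULT_BYTES} bytes")
print(f"reduction: about {reduction:,.0f}x")
```

Under these assumptions, a minute of audio is about 1.9 MB, so sending only the result cuts the upload by several thousand times. Video and high-rate sensor streams would make the gap larger still.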

Edge AI use cases and industry examples

Edge AI combines the strengths of the cloud with the benefits of local operation to improve the performance of its algorithms over time. Common applications include speech recognition, fingerprint detection, face-ID authentication, fraud detection and autonomous vehicles.

For example, in an autonomous navigation system, the AI algorithms are trained in the cloud, while inferencing runs on board the vehicle to control steering, acceleration and braking. Data scientists develop improved driving models in the cloud, and these are pushed out to every vehicle that needs them.

In some vehicles, the AI system simulates driving decisions even while a human is at the wheel. When the driver does something the AI did not expect, the system records the relevant video and uploads it to the cloud to help improve the algorithm. With each update, the main control algorithm is improved using data pooled from every car in the fleet.
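The comparison step described above could look roughly like the following sketch. It is an illustration only: the `Frame` record, the steering-angle comparison and the 10-degree threshold are hypothetical choices, not details of any vehicle maker's system. The on-board model's prediction is compared with what the driver actually did, and only clips with a large disagreement are marked for upload.

```python
from dataclasses import dataclass

# Hypothetical threshold: how far (in degrees of steering angle) the driver
# may deviate from the model's prediction before the clip is flagged.
DISAGREEMENT_THRESHOLD_DEG = 10.0


@dataclass
class Frame:
    clip_id: str
    predicted_steering_deg: float  # what the on-board model would have done
    actual_steering_deg: float     # what the human driver did


def clips_to_upload(frames):
    """Return IDs of clips where the driver and the model clearly disagreed."""
    return [
        f.clip_id
        for f in frames
        if abs(f.predicted_steering_deg - f.actual_steering_deg)
        > DISAGREEMENT_THRESHOLD_DEG
    ]


# Made-up sample data: only the second clip exceeds the threshold.
frames = [
    Frame("clip-001", 2.0, 3.5),
    Frame("clip-002", -1.0, 14.0),
    Frame("clip-003", 0.0, -0.5),
]
print(clips_to_upload(frames))
```

Filtering on the device keeps the upload volume small, since only the surprising cases leave the vehicle.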

Here are a few more examples of edge AI in practice:

- Speech recognition algorithms transcribe speech into text on mobile devices.

- Google's AI can generate realistic backgrounds to replace people or objects removed from a photo.

- Wearable health monitors assess heart rate, blood pressure, glucose levels and breathing locally, using AI models developed in the cloud.

- A robotic arm gradually learns a better way of picking up a particular type of package, then shares what it has learned with other robots through the cloud.

- Amazon Go combines edge AI and cloud services to offer cashier-less checkout, automatically tallying the items a shopper places in their bag without a separate checkout step.

- Smart traffic cameras adjust light timings to improve the flow of vehicles.

Edge AI vs. cloud AI

The distinction between edge AI and cloud AI is easier to follow from its history. Mainframes, desktops, smartphones and embedded systems all came long before anything called a ‘cloud’ or an ‘edge.’ Applications for them were developed slowly using Waterfall practices, with teams cramming as much functionality and testing as possible into yearly updates.

The cloud brought automation to data center processes, which let teams adopt Agile development practices. Some large cloud applications are now updated dozens of times per day. Updates this granular make it practical to build application functionality in smaller pieces. Edge computing extends these modular development practices beyond the cloud to individual devices such as phones, appliances and autonomous vehicles. Edge AI applies the same idea to AI: it extends AI workflows so that models can run on-device or at remote edge locations.

Edge AI devices vary widely in form and capability. A basic smart speaker sends all audio to the cloud for processing, while more capable edge systems, such as 5G access servers, can serve many devices in a local area. LF Edge, a Linux Foundation group, counts light bulbs, cell phones, on-premises computers and smaller regional data centers as edge devices, each with a different level of capability.

Edge AI and cloud AI work together, with the edge device and the cloud contributing in varying degrees to both training and inferencing. At one extreme, the edge device sends its data to the cloud for inferencing. At the other, the device runs inferencing locally against models trained in the cloud. Other arrangements give the edge a larger role, including helping to train machine learning models.

The future of edge technology

Edge AI is growing rapidly. LF Edge estimates that the power consumption of edge devices will increase 40-fold, to 40 GW, by 2028. At present, consumer products such as smartphones, wearables and appliances account for much of this usage. Enterprise applications are expected to become more prominent in areas such as cashier-less checkout, smart hospitals, smart cities, Industry 4.0 and supply chain automation.

An emerging technique, federated deep learning, could improve the privacy and security of edge AI. Traditional training pushes a subset of raw data from each device to the cloud. In federated learning, each device instead computes model updates locally and sends only those updates to the cloud, which reduces the privacy risk. Better orchestration of AI across edge devices is also expected.
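The idea of sending updates rather than raw data can be sketched with a toy example. The code below assumes a one-parameter model (`y = w * x`), a single gradient step per device per round, and an aggregation step that averages device weights by sample count, in the style of federated averaging. The function names, learning rate and datasets are invented for illustration; production systems add secure aggregation, device sampling and much larger models.

```python
def local_update(weights, data, lr=0.1):
    """One gradient-descent step for y = w * x on a device's private data."""
    w = weights[0]
    grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
    return [w - lr * grad]


def federated_average(updates, sizes):
    """Average device weights, weighted by each device's sample count."""
    total = sum(sizes)
    n_params = len(updates[0])
    return [
        sum(u[i] * n for u, n in zip(updates, sizes)) / total
        for i in range(n_params)
    ]


# Private per-device datasets (made up; both roughly follow y = 2x).
# These lists never leave the "device"; only updated weights are shared.
device_data = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(1.0, 2.1), (3.0, 5.9), (2.0, 4.0)],
]

global_weights = [0.0]
for _ in range(50):
    updates = [local_update(global_weights, d) for d in device_data]
    sizes = [len(d) for d in device_data]
    global_weights = federated_average(updates, sizes)

print(global_weights)
```

After enough rounds the shared weight settles close to 2, even though the server only ever sees weights and sample counts, never the underlying data points.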

Today, most edge AI algorithms run local inferencing directly against data the device itself can see. In time, more sophisticated tools might run local inferencing using data from a collection of sensors near the device.

Compared with the well-established DevOps practices for building applications, the development and operation of AI models are still in their infancy. Data scientists and engineers face a range of data management challenges in orchestrating edge AI workflows, which span everything from data collection on edge devices to modeling and deployment in the cloud.

As the technology matures, the tools for these processes are likely to improve as well, making it easier to scale edge AI applications and develop new AI architectures. For instance, specialists have already begun testing ways of deploying edge AI in 5G data centers located closer to mobile and IoT devices. New tools could also give businesses the opportunity to explore new kinds of federated data warehouses, with data stored nearer the edge.